There’s a video from the show below, and if you’re interested in the process of sharing live VJing content across computers and operating systems, read the entire post.

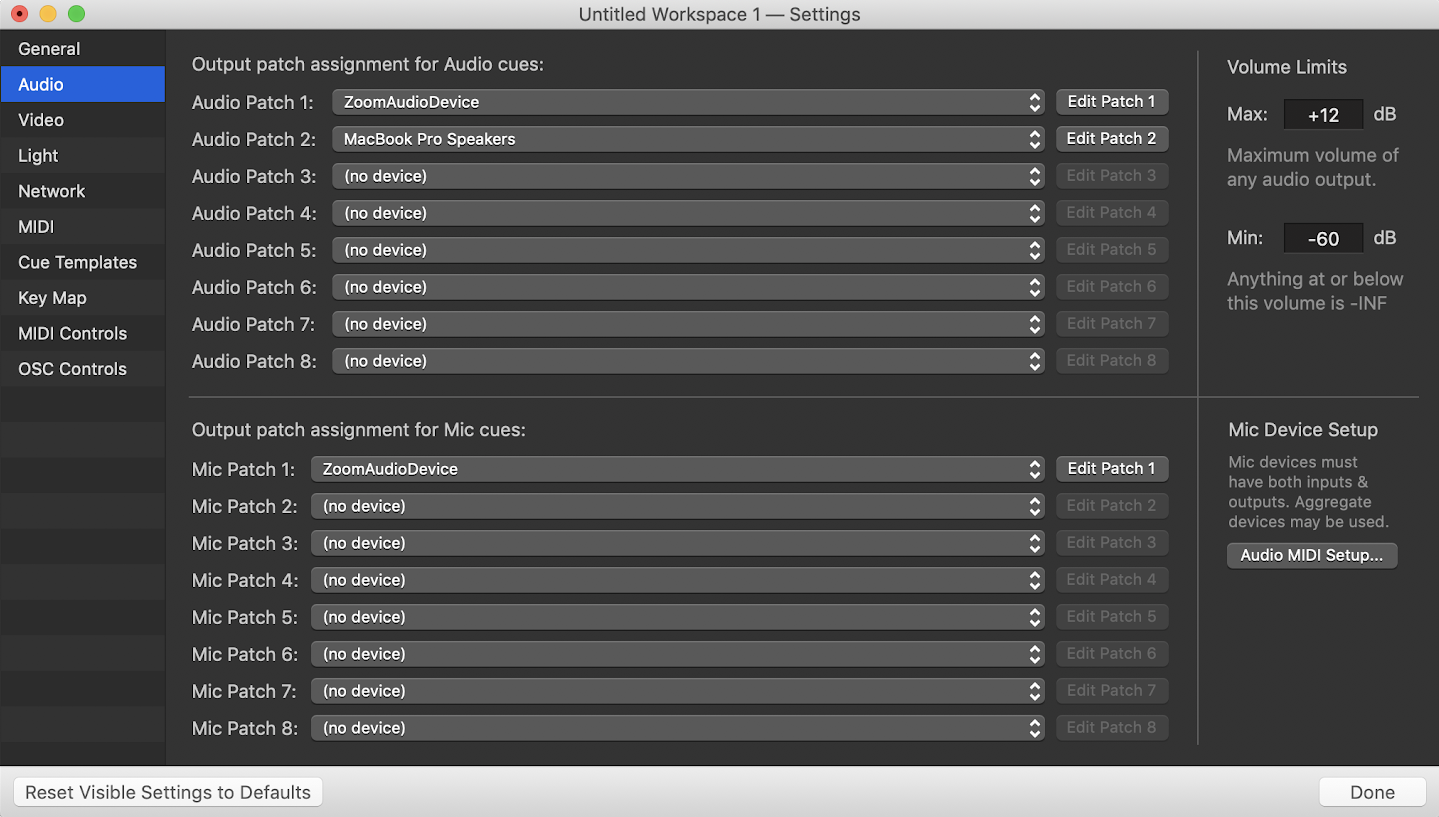

It wasn’t my first time using a multiple projector/computer setup, but adding a PC and a live-coding VJ into the mix required a new workflow. I thought it would be a good idea to document the process used for Cosmic Sound’s Altared event w/ Bary Center, J Butler, To Sleep At Night, and Dilettante.

A good friend of mine and frequent artistic collaborator, Trevor Dean Stewart, is a composition student at Columbia College Chicago. He shared a recent composition for the New Music Ensemble there, named Beam, and asked me to develop some visuals for it. Beam is an extremely complex composition that contains a great deal of synthesized or electro-acoustic music written and performed by Trevor himself, as well as the analog work of 19 other musicians in the Ensemble; it ended up being over 50 tracks when arranged in Logic Pro and Ableton Live.

He and I sat down, listened through an initial mix of the composition, and divided it into 6 movements, each with a distinct sonic and emotional character; some were mostly digital, others mostly analog. We talked through some of his ideas for the visualization and some of the things that came to mind when he listened to, arranged, and wrote the music. After I walked him through the many options for generative/modular/parametric media native to MadMapper, I moved on to designing and programming the visualization.

Splitting the movements into individual pieces (defined, for example, by particular musical phrases or the predominance of a particular instrument), I began to design visual compositions and record them to Scenes in MadMapper. I used different media and applied different effects/blend modes to individual surfaces or groups of surfaces, creating distinct elements for each movement that seemed to "map" or follow the musical phrases or particular instruments. Over the course of the composition, I created nearly 300 quads/triangles/circles/surfaces in MadMapper. This image displays some of the OSC controls, as well as the way the show control operates when the song has been played/the MadMapper composition has begun.

I learned a lot about using MadMapper as an animation tool and have become pretty familiar with the ins and outs of cueing when making changes to the built-in media as well as to the geometry of surfaces. I feel like I was able to augment the sonic achievement Trevor made with such a complex composition by creating what I think is a pretty complex MadMapper visualization.

As far as show control goes, I am not sure the array of auto-follows in QLab was the most intelligent way to time each cue’s action: changing the timing of an individual cue was challenging, because it could require changing the timing of every cue that fell after it. Nonetheless, I’m happy with the composition and am glad to support a talented artist with techniques developed over the course of this semester.
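The ripple effect of retiming a cue in a chain of QLab auto-follows, described above, can be illustrated with a toy model. This is a hypothetical sketch in plain Python, not QLab's API or file format: the cue durations are made up, and the helper names (`chained_start_times`, `bumped`) are invented for illustration. It compares a chain where each cue starts when the previous one ends against a list of absolute offsets measured from the top of the track.

```python
from itertools import accumulate


def chained_start_times(durations):
    """Start times when each cue auto-follows the one before it.

    Cue i begins when cue i-1 ends, so its start time is the sum of the
    durations of every earlier cue. (Toy model, not QLab's API.)
    """
    if not durations:
        return []
    return list(accumulate(durations[:-1], initial=0.0))


def bumped(values, index, delta):
    """Return a copy of values with values[index] increased by delta."""
    edited = list(values)
    edited[index] += delta
    return edited


# Hypothetical durations (in seconds) for four cues in one movement.
durations = [12.0, 8.0, 20.0, 15.0]
chained = chained_start_times(durations)

# Auto-follow chain: delaying the third cue by 3 s means lengthening the
# second cue, which also pushes the fourth cue back.
chained_edit = chained_start_times(bumped(durations, 1, 3.0))

# Absolute offsets: each cue stores its own time from the top of the track,
# so moving the third cue leaves every other cue where it was.
absolute_edit = bumped(chained, 2, 3.0)

print(chained)        # [0.0, 12.0, 20.0, 40.0]
print(chained_edit)   # [0.0, 12.0, 23.0, 43.0]
print(absolute_edit)  # [0.0, 12.0, 23.0, 40.0]
```

In this model the trade-off runs both ways: absolute offsets isolate a single edit, but if the mix itself gets longer or shorter, every offset after the change has to be shifted by hand, whereas a chain keeps cues spaced relative to one another.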